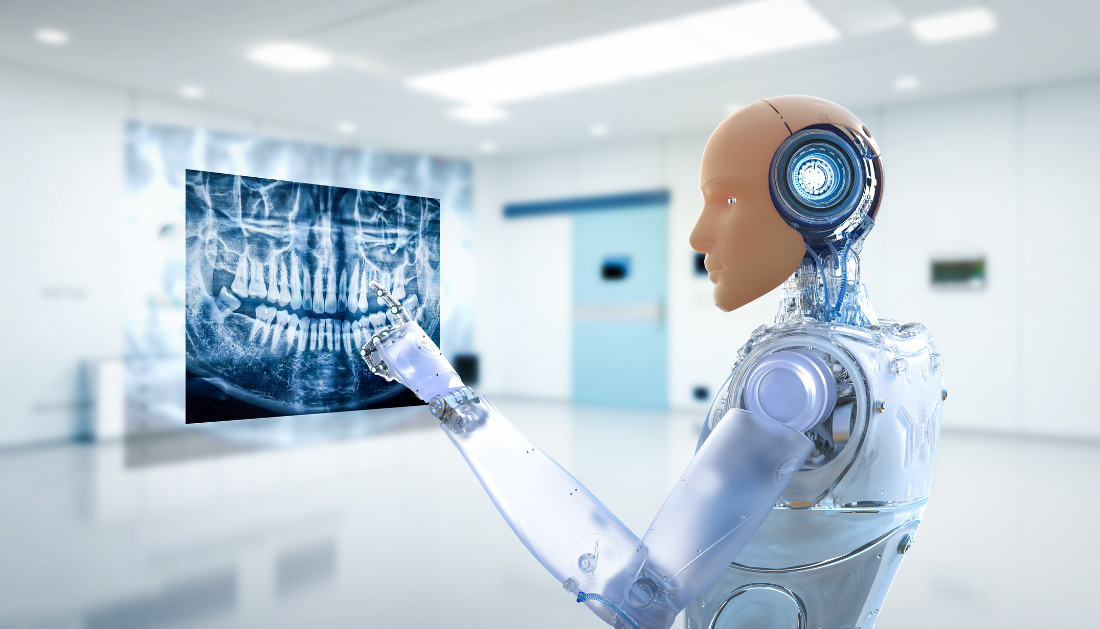

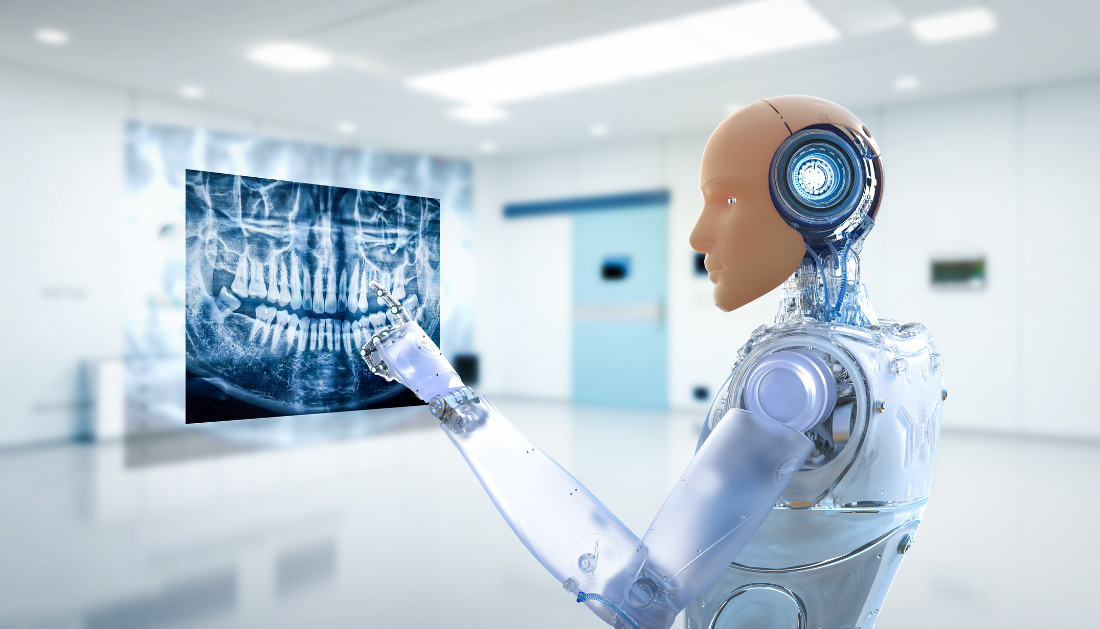

A study from Harvard Medical School and Stanford University, published in Nature Medicine, finds that although AI doctors perform well on standardized medical exams, they often struggle in real-world clinical conversations. The researchers introduce CRAFT-MD (Conversational Reasoning Assessment Framework for Testing in Medicine), an evaluation framework that measures how well AI doctors and other medical AI tools handle real-life patient interactions.

Large language models (LLMs) such as ChatGPT have shown promise in helping clinicians triage patients, collect medical histories, and offer preliminary diagnoses. However, the study highlights a critical issue: AI models that perform well on structured, multiple-choice medical exams often falter in unstructured, back-and-forth conversations with patients.

CRAFT-MD evaluates AI performance in realistic patient scenarios by simulating clinical conversations where AI agents act as patients and graders. The assessment focuses on key factors such as information gathering, diagnostic reasoning, and conversational accuracy across 2,000 clinical vignettes spanning 12 medical specialties.
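The evaluation loop described above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not the CRAFT-MD code: in the study, AI agents play the patient and grader roles, whereas here `patient_agent`, `doctor_agent`, and `grader_agent` are deterministic stand-ins, and the cases, findings, and rules are invented placeholders. The sketch shows how the same model can score well when handed a complete vignette yet miss diagnoses when it must gather the facts by asking.

```python
from dataclasses import dataclass


@dataclass
class Case:
    """Hypothetical vignette: findings keyed by topic plus the reference diagnosis."""
    facts: dict[str, str]
    diagnosis: str


def patient_agent(case: Case, topic: str) -> str:
    # Stand-in for the simulated patient: reveals a finding only when asked about it.
    return case.facts.get(topic, "not mentioned")


def doctor_agent(known: dict[str, str], rules: dict[str, dict[str, str]]) -> str:
    # Stand-in for the model under test: commits to the first diagnosis whose
    # required findings have all been gathered, otherwise stays undetermined.
    for diagnosis, required in rules.items():
        if all(known.get(topic) == value for topic, value in required.items()):
            return diagnosis
    return "undetermined"


def grader_agent(predicted: str, case: Case) -> bool:
    # Stand-in for the grader: exact match against the reference diagnosis.
    return predicted == case.diagnosis


def run_vignette(case: Case, rules: dict[str, dict[str, str]]) -> bool:
    # Exam-style setting: the whole case is handed to the model up front.
    return grader_agent(doctor_agent(case.facts, rules), case)


def run_conversation(case: Case, rules: dict[str, dict[str, str]], questions: list[str]) -> bool:
    # Conversational setting: the model learns only what it thinks to ask.
    known = {topic: patient_agent(case, topic) for topic in questions}
    return grader_agent(doctor_agent(known, rules), case)


def accuracy(results: list[bool]) -> float:
    # Fraction of cases the grader marked correct.
    return sum(results) / len(results)


# Placeholder findings and diagnoses, not real clinical content.
rules = {
    "condition_a": {"fever": "yes", "rash": "yes"},
    "condition_b": {"fever": "no", "cough": "yes"},
}
cases = [
    Case({"fever": "yes", "rash": "yes", "cough": "no"}, "condition_a"),
    Case({"fever": "no", "rash": "no", "cough": "yes"}, "condition_b"),
]
questions = ["fever", "cough"]  # the simulated doctor never asks about "rash"

print(accuracy([run_vignette(c, rules) for c in cases]))                 # 1.0
print(accuracy([run_conversation(c, rules, questions) for c in cases]))  # 0.5
```

Because the toy doctor never asks about `rash`, it cannot confirm `condition_a` during the conversation, loosely mirroring the missed follow-up questions the study reports.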

The results showed a consistent pattern: the AI tools often failed to ask the right follow-up questions, overlooked essential details in the patient history, and struggled to synthesize information scattered across open-ended exchanges. Their diagnostic accuracy in these conversations was markedly lower than on structured, exam-style questions.

The research team, led by Pranav Rajpurkar of Harvard Medical School, emphasized that dynamic doctor-patient interactions require AI to adapt, ask critical questions, and piece together fragmented information, abilities that current AI tools have yet to master.

To address these limitations, the study recommends:

- Designing AI models with better conversational reasoning capabilities.

- Using open-ended testing frameworks instead of rigid multiple-choice formats.

- Training AI to process verbal and non-verbal cues, including tone and body language.

- Integrating textual and non-textual data, such as images and lab results, into diagnostics.

The authors also advocate a hybrid evaluation approach in which AI agents complement human experts, making testing more efficient and scalable.

As noted by Roxana Daneshjou, M.D., from Stanford University, “CRAFT-MD represents a major step forward, offering a real-world evaluation framework to ensure AI tools meet clinical standards before being deployed in healthcare settings.”

More Information: An evaluation framework for clinical use of large language models in patient interaction tasks, Nature Medicine (2024). DOI: 10.1038/s41591-024-03328-5
